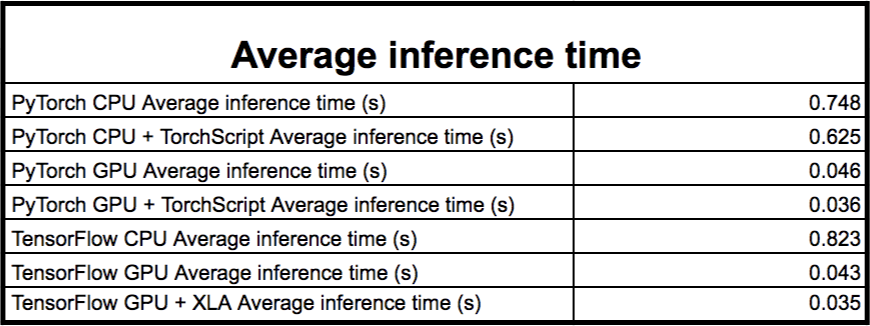

How to PyTorch in Production. How to avoid most common mistakes in… | by Taras Matsyk | Towards Data Science

PyTorch on X: "4. ⚠️ Inference tensors can't be used outside InferenceMode for Autograd operations. ⚠️ Inference tensors can't be modified in-place outside InferenceMode. ✓ Simply clone the inference tensor and you're

TorchServe: Increasing inference speed while improving efficiency - deployment - PyTorch Dev Discussions

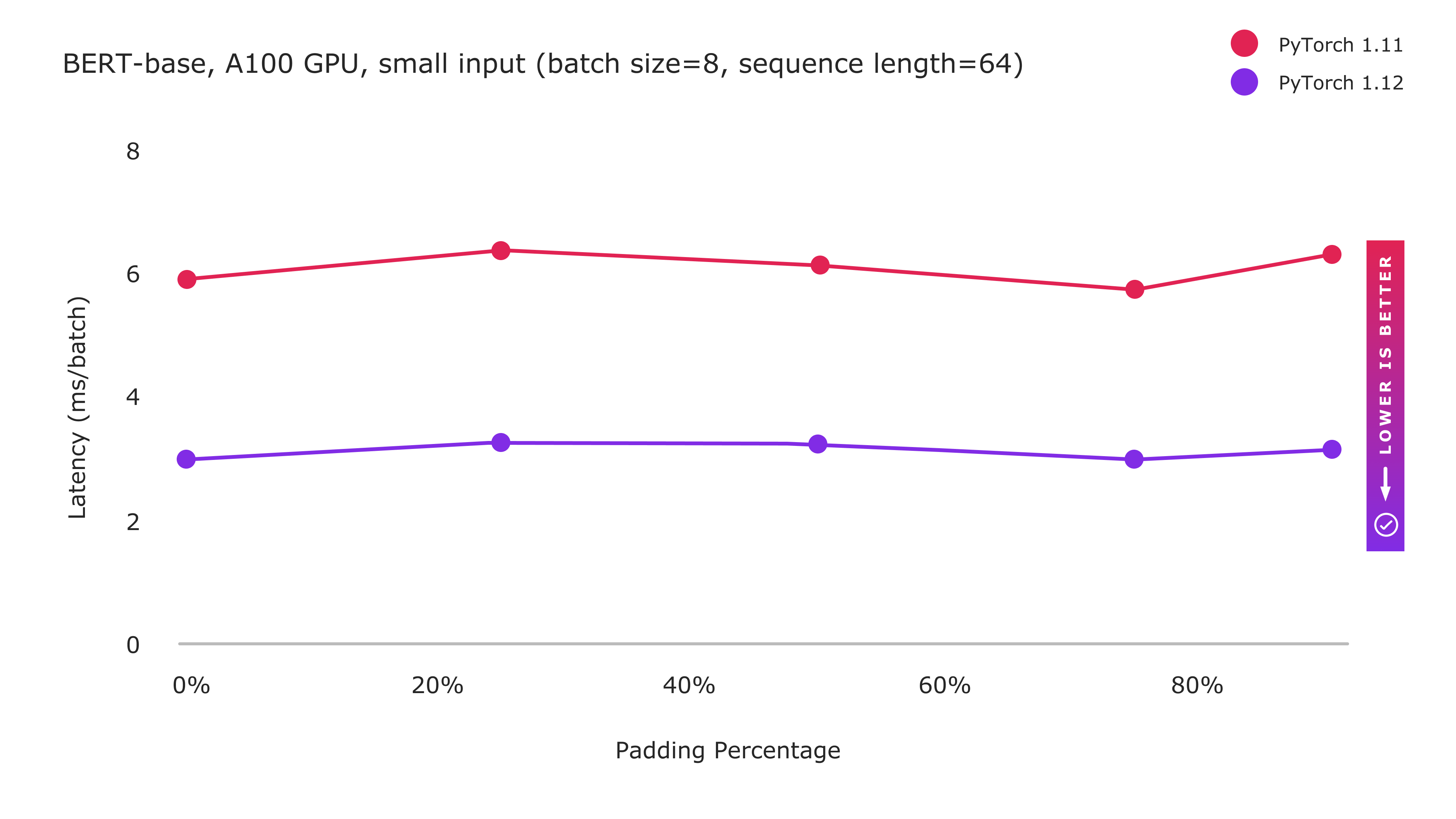

Reduce inference costs on Amazon EC2 for PyTorch models with Amazon Elastic Inference | AWS Machine Learning Blog

Inference mode throws RuntimeError for `torch.repeat_interleave()` for big tensors · Issue #75595 · pytorch/pytorch · GitHub

Abubakar Abid on X: "3/3 Luckily, we don't have to disable these ourselves. Use PyTorch's `torch.inference_mode` decorator, which is a drop-in replacement for `torch.no_grad` ...as long you need those tensors for anything

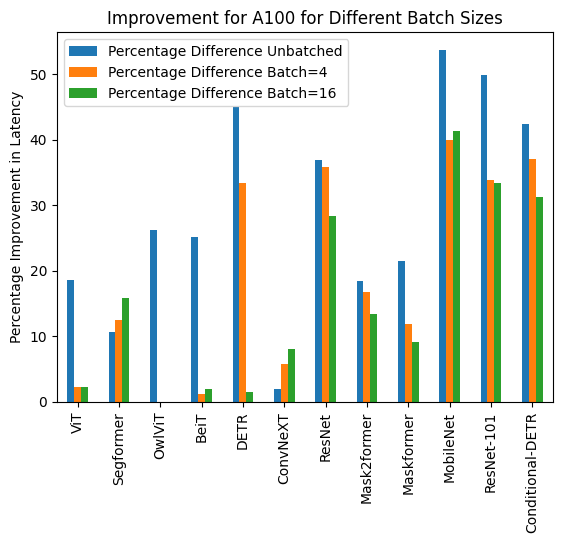

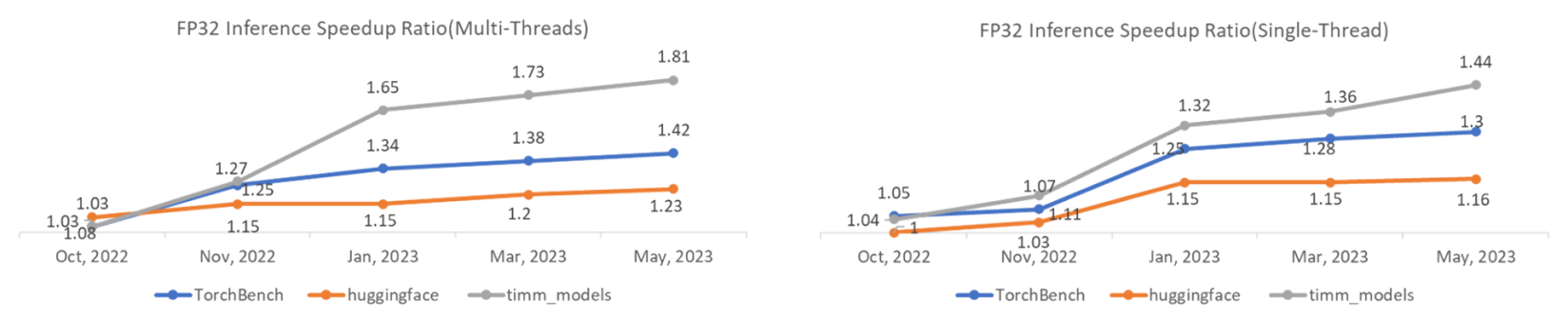

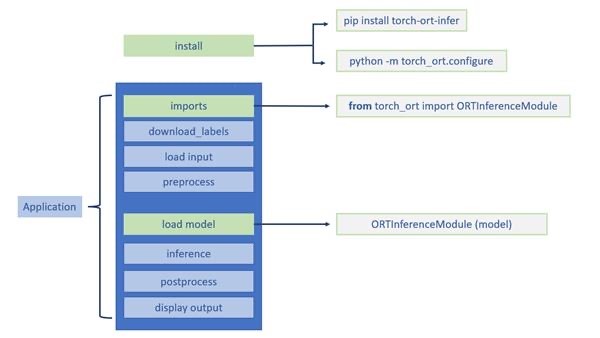

Faster inference for PyTorch models with OpenVINO Integration with Torch-ORT - Microsoft Open Source Blog